Signal Briefs

Apple’s integration of Google’s Gemini models into Siri has been framed as a major step forward in AI capability. This Signal Brief argues otherwise: the partnership is fundamentally shaped by inference cost constraints, not intelligence leadership. While Gemini enables scalable, efficient responses, its architecture prioritizes speed and cost over deep reading and reasoning. Over time, this tradeoff may introduce subtle but compounding trust risks within Apple’s ecosystem.

1. A Partnership Framed as Progress

Apple’s adoption of Google’s Gemini models has been widely interpreted as a leap forward in AI capability. On the surface it is: the integration lets Siri deliver faster, more conversational responses at scale. But the deeper story is not about intelligence. It is about economics.

2. The Constraint: Inference at Apple Scale

At Apple’s scale, every query carries cost. Serving billions of requests requires predictable latency, minimal compute per request, and controlled infrastructure exposure. These constraints shape system behavior more than model capability does.
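To make the scale argument concrete, the arithmetic can be sketched in a few lines of Python. The request volume and per-request costs below are hypothetical placeholders chosen only to show how a small per-query difference multiplies. They are not reported figures for Apple or Google.

```python
def daily_inference_cost(requests_per_day: int, cost_per_request_usd: float) -> float:
    """Total daily spend, in USD, for a given request volume and unit cost."""
    return requests_per_day * cost_per_request_usd


# Hypothetical placeholder values (assumptions for illustration only).
REQUESTS_PER_DAY = 1_000_000_000      # one billion requests per day
EFFICIENT_COST_USD = 0.0005           # assumed cost of a fast, shallow answer
DEEP_COST_USD = 0.005                 # assumed cost of a slower, deeper answer

efficient_daily = daily_inference_cost(REQUESTS_PER_DAY, EFFICIENT_COST_USD)
deep_daily = daily_inference_cost(REQUESTS_PER_DAY, DEEP_COST_USD)

# The gap between the two modes, scaled to a year.
annual_gap = (deep_daily - efficient_daily) * 365

print(f"Efficient mode: ${efficient_daily:,.0f} per day")
print(f"Deep mode:      ${deep_daily:,.0f} per day")
print(f"Annual gap:     ${annual_gap:,.0f}")
```

Under these assumed inputs, a difference of fractions of a cent per request becomes a budget line in the billions per year, which is the sense in which cost can outweigh capability as a design constraint.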

3. Why Gemini Fits Apple’s Requirements

Gemini is optimized for efficiency. It prioritizes fast responses, controlled compute usage, and scalable deployment. This makes it a natural fit for Apple’s requirements: reliable, cost-contained intelligence that can operate across billions of devices.

4. The Architectural Tradeoff: Infer First, Read Later

To achieve that efficiency, Gemini often infers from patterns — titles, context cues, and prior knowledge — rather than fully reading or processing every source by default. This reduces latency and cost, but it also changes how answers are generated.

5. When Efficiency Becomes Approximation

At low stakes, this tradeoff is invisible. At higher stakes, it becomes consequential. Answers may appear confident while lacking depth, precision, or full context. These are not frequent failures — but they are meaningful ones.

6. The Emergence of Trust Debt

Small inaccuracies accumulate. Over time, users begin to question not whether the system works — but when it can be trusted. This is not a failure of intelligence. It is a byproduct of optimization.
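One way to see why rare errors still accumulate is a simple probability sketch. It assumes each query fails independently with the same small probability, which is a simplification rather than a measured property of Siri or Gemini, and the error rate used is a hypothetical placeholder.

```python
def chance_of_at_least_one_error(per_query_error_rate: float, queries: int) -> float:
    """Probability that a user sees one or more inaccurate answers.

    Assumes independent queries with an identical error rate (a simplification).
    """
    if not 0.0 <= per_query_error_rate <= 1.0:
        raise ValueError("per_query_error_rate must be between 0 and 1")
    if queries < 0:
        raise ValueError("queries must be non-negative")
    return 1.0 - (1.0 - per_query_error_rate) ** queries


# Hypothetical error rate of 1% per query (assumption for illustration only).
for n in (10, 100, 1000):
    p = chance_of_at_least_one_error(0.01, n)
    print(f"{n:>5} queries: {p:.1%} chance of at least one inaccurate answer")
```

Under these assumptions, a 1% per-query error rate gives a user roughly a 63% chance of meeting at least one inaccurate answer within 100 queries. Each failure is infrequent, yet exposure to one becomes likely with regular use.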

7. Apple’s Exposure: Brand vs Behavior

Apple’s brand is built on reliability and trust. A system that delivers fast but occasionally shallow answers introduces a subtle tension between expectation and experience. The risk is not immediate — it is cumulative.

8. A Modular Intelligence Stack

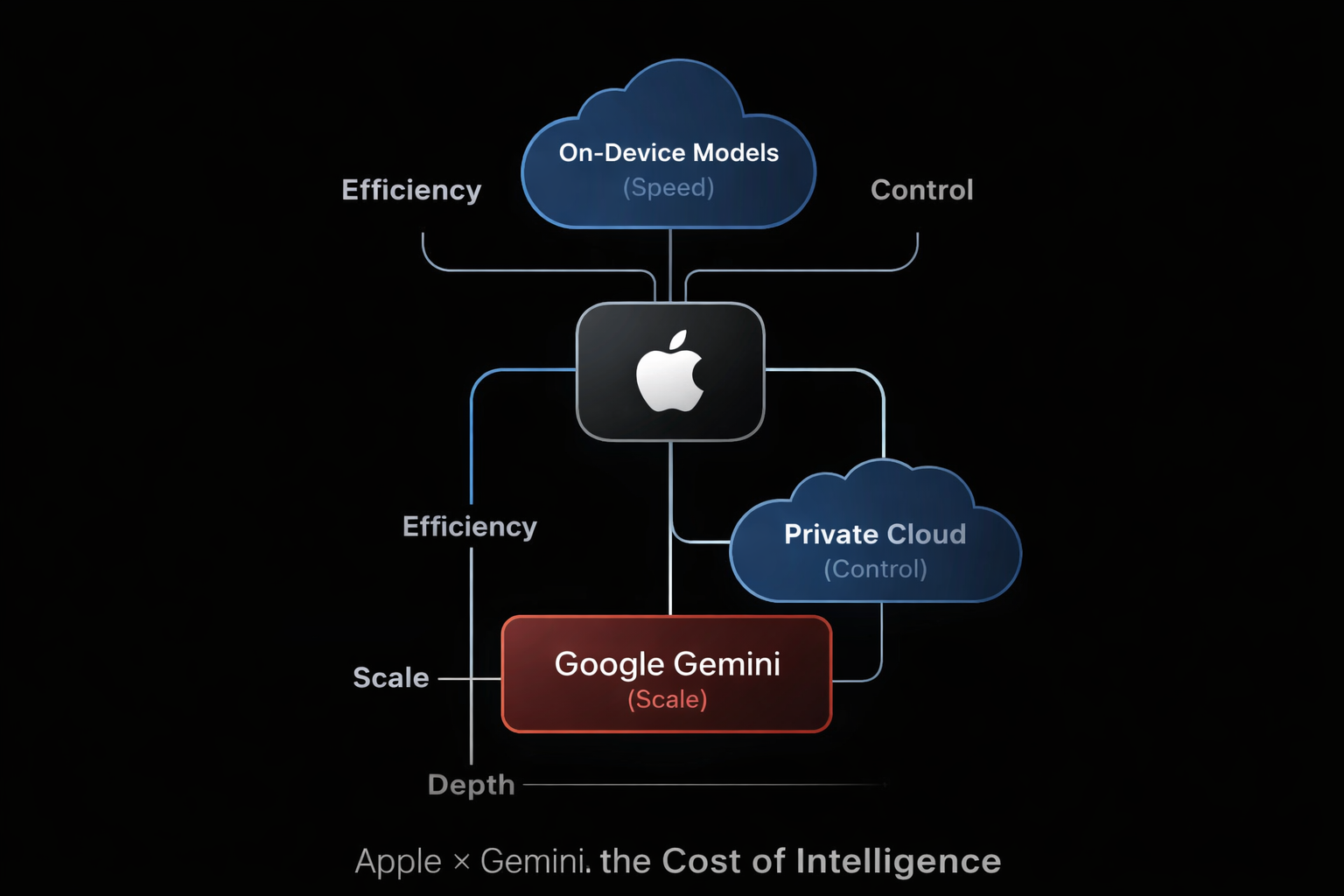

Apple’s architecture reflects this tradeoff:

- lightweight on-device models for speed

- private cloud processing for control

- Gemini for higher-compute tasks

This layered approach maximizes efficiency, but it fragments consistency: similar requests can be handled by different tiers and answered with different depth.
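The three tiers listed above can be pictured as a routing decision. The following is a minimal sketch of such a router, not Apple's actual logic: the `Request` fields, the compute score, and both thresholds are hypothetical names and values introduced purely for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    ON_DEVICE = "on-device model"
    PRIVATE_CLOUD = "private cloud"
    GEMINI = "Gemini"


@dataclass(frozen=True)
class Request:
    text: str
    estimated_compute: float   # hypothetical score from 0.0 (trivial) to 1.0 (heavy)
    needs_personal_data: bool  # hypothetical flag: request touches private user data


def route(request: Request,
          light_threshold: float = 0.3,
          heavy_threshold: float = 0.7) -> Tier:
    """Pick a tier for a request. Thresholds are assumed values, not known settings."""
    # Cheap requests stay on the device for speed.
    if request.estimated_compute < light_threshold:
        return Tier.ON_DEVICE
    # Requests involving personal data, or of moderate cost, go to the controlled tier.
    if request.needs_personal_data or request.estimated_compute < heavy_threshold:
        return Tier.PRIVATE_CLOUD
    # Everything else is treated as a higher-compute task.
    return Tier.GEMINI


if __name__ == "__main__":
    samples = [
        Request("Set a timer for ten minutes", 0.1, False),
        Request("Summarize my unread messages", 0.9, True),
        Request("Compare three mortgage options in detail", 0.9, False),
    ]
    for sample in samples:
        print(f"{sample.text!r} -> {route(sample).value}")
```

The sketch also shows where inconsistency can enter: two requests that look similar to a user can land on different tiers, depending on a score the user never sees.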

9. What This Signals About Apple’s Strategy

Apple has chosen controlled intelligence over maximal intelligence. The goal is not to lead in raw capability, but to deliver a system that is stable, efficient, and integrated within its ecosystem.

10. The Long-Term Question

The Apple × Gemini partnership is not defined by what it enables today, but by what it optimizes for tomorrow. If efficiency continues to outweigh depth, the system may scale — but trust will determine whether it endures.
